I built a feedback pipeline for Kotoba. Full stack: in-app reporting, custom admin dashboard, and a one-click path from user bug report to pull request.

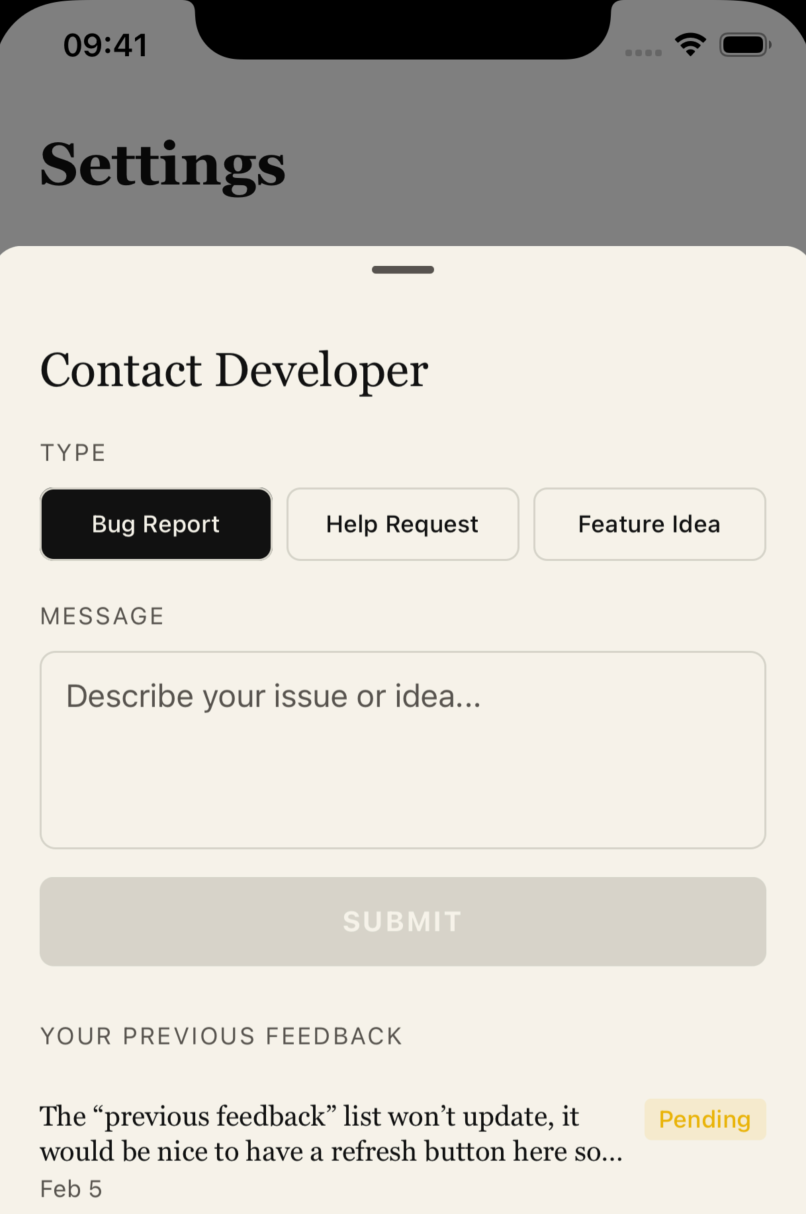

It starts with the app. I added an in-app feedback form that ships with the next release. It captures device info, OS version, and whatever the user wants to say. That hits my backend and shows up in the admin panel I built a while back, the same dashboard where I track daily active users and other engagement metrics.

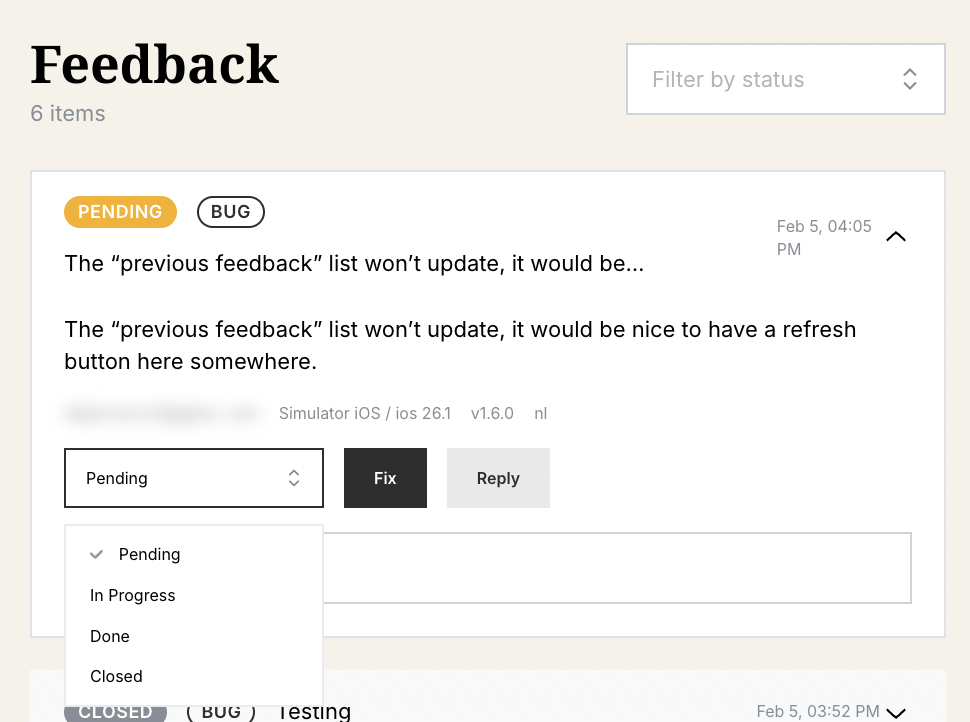

Then I added the fun part: a “Fix” button next to each bug report. That one click does two things: it creates a GitHub issue with all the context (device, app version, what they wrote), and it triggers OpenCode to have a go at solving it.

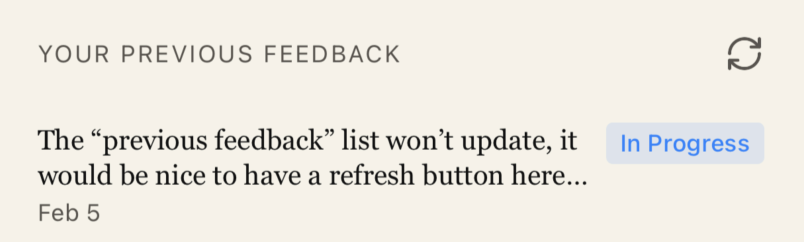
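Roughly, the issue-creation half of that click boils down to turning a stored report into an issue title and body. Here is a minimal sketch of that step, not the actual Kotoba code: the `FeedbackReport` fields, the `buildIssuePayload` name, and the `from-feedback` label are all made up for illustration.

```typescript
// Hypothetical shape of a stored feedback report; field names are assumptions.
interface FeedbackReport {
  id: string;
  message: string;
  device: string;
  osVersion: string;
  appVersion: string;
}

// Subset of the fields GitHub's "create an issue" REST endpoint accepts.
interface IssuePayload {
  title: string;
  body: string;
  labels: string[];
}

// Turn a report into an issue payload: the first line of the user's message
// becomes the title (truncated to 72 chars), the rest goes into the body.
function buildIssuePayload(report: FeedbackReport): IssuePayload {
  const firstLine = (report.message.split("\n")[0] ?? "").trim();
  const title =
    firstLine.length > 72 ? firstLine.slice(0, 69) + "..." : firstLine;

  const body = [
    "## User report",
    report.message,
    "",
    "## Context",
    `- Device: ${report.device}`,
    `- OS version: ${report.osVersion}`,
    `- App version: ${report.appVersion}`,
    `- Feedback ID: ${report.id}`,
  ].join("\n");

  return {
    title: title || "Untitled bug report",
    body,
    labels: ["bug", "from-feedback"], // label names are placeholders
  };
}
```

The resulting object can be sent as the JSON body of a `POST /repos/{owner}/{repo}/issues` request with a token that has issue write access. Keeping the formatting in a pure function like this makes it easy to test without touching GitHub at all.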

I needed to test this, so I started dogfooding. Filed a test report from the app: the feedback list wasn’t refreshing when you navigated back to it. Switched to my admin panel, saw the report sitting there, hit Fix.

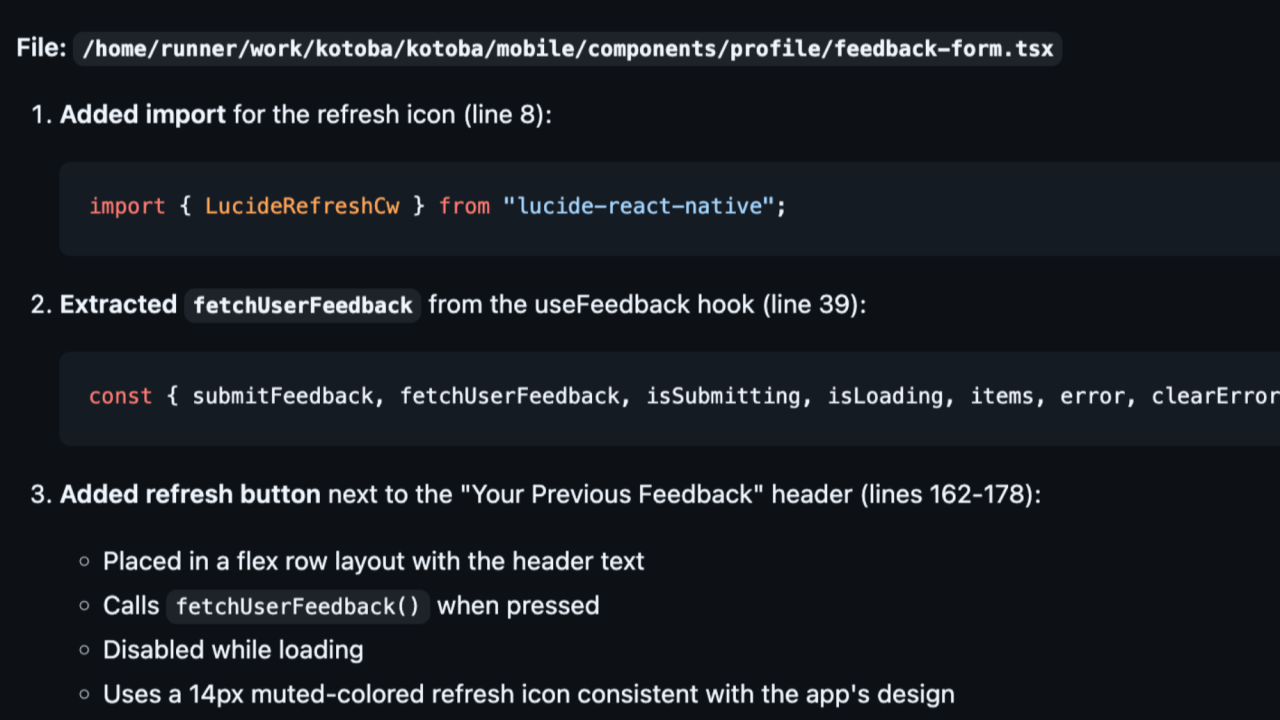

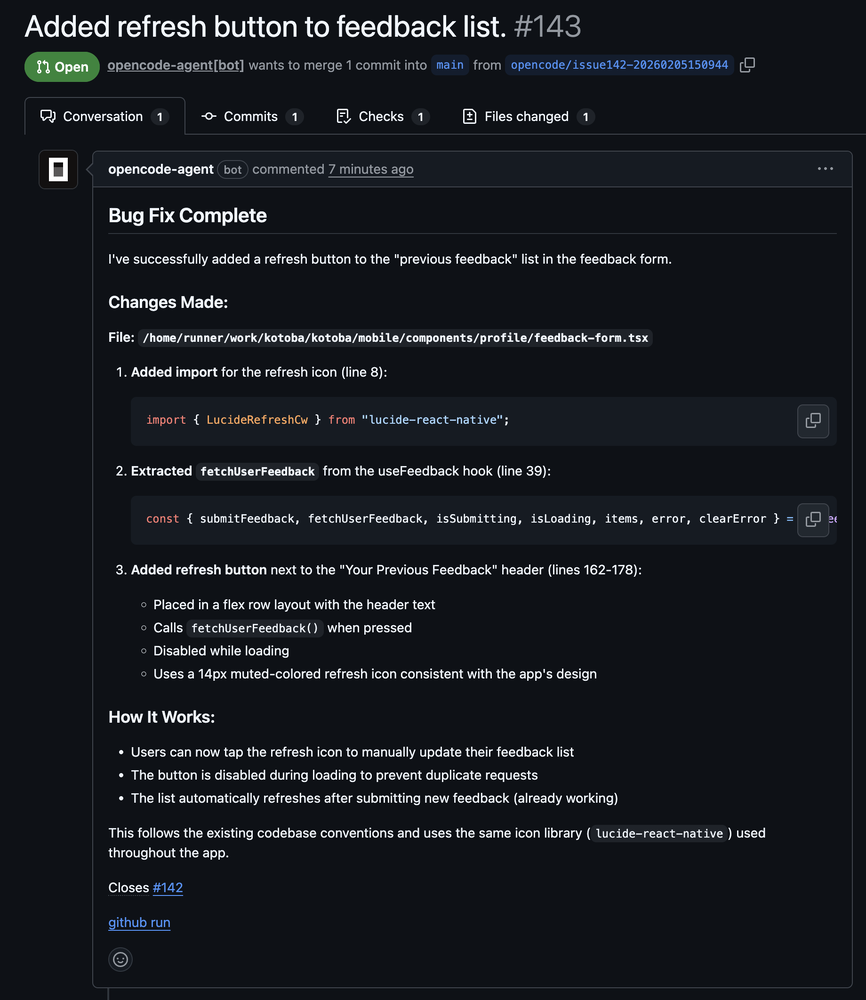

Seven minutes later I had PR #143. The agent found the right React component, extracted the refresh logic from my existing data hook, put the button in the right place, even matched the styling I use everywhere else. Clean commits, green CI, no conflicts.

Here’s the thing, though: I didn’t just merge it. I read through every line of the diff. Not because the code was bad; it actually followed my patterns quite well. But I’m the one who has to maintain this codebase. The AI saw “implement refresh button” and dutifully implemented a refresh button. I saw “is this actually the fix I want, or should I solve the underlying navigation state issue instead?”

Building the pipeline was quite straightforward. A couple of Next.js API endpoints: one to create the GitHub issue, one to listen for PR merge webhooks. HMAC signature verification to make sure the webhook calls are actually from GitHub. Firebase updates to sync the status back to the admin panel. A “Fix” button in the React admin UI. The usual stuff: tokens, env vars, error handling.
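For the signature check, GitHub signs each webhook delivery with HMAC-SHA256 over the raw request body and sends the result in the `X-Hub-Signature-256` header as `sha256=<hex digest>`. A minimal sketch of the verification, assuming a Node.js runtime; the function name `verifyGitHubSignature` is mine, and how you get at the raw body depends on how your Next.js route is set up:

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Returns true when the X-Hub-Signature-256 header value matches the
// HMAC-SHA256 of the raw request body under the shared webhook secret.
function verifyGitHubSignature(
  rawBody: string,
  signatureHeader: string | undefined,
  secret: string,
): boolean {
  if (!signatureHeader) return false;

  const expected =
    "sha256=" +
    createHmac("sha256", secret).update(rawBody, "utf8").digest("hex");

  const expectedBuf = Buffer.from(expected, "utf8");
  const receivedBuf = Buffer.from(signatureHeader, "utf8");

  // timingSafeEqual throws on length mismatch, so compare lengths first.
  return (
    expectedBuf.length === receivedBuf.length &&
    timingSafeEqual(expectedBuf, receivedBuf)
  );
}
```

Two details matter here. The HMAC has to be computed over the raw bytes of the body, not a re-serialized JSON object, or the digests won't match. And the comparison is constant-time so the endpoint doesn't leak how much of a forged signature was correct.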

The code isn’t the hard part. The judgment call is. That’s why the human gate stays in the loop.

Now the workflow is: user reports bug → hits my admin panel → I click Fix or close the issue → AI handles branch setup, issue description, initial implementation → I review and decide. Takes two minutes instead of twenty, but I’m still the one hitting merge and setting up the App Store release process.

When something breaks in production, I want to say “yes, I reviewed that PR and shipped it.” Not “the robot did it and I hope it works.”

Automate the mechanics. Keep the decisions. And don’t listen to the doomers; software engineering is more alive than ever.

Ah, and here’s the final result, btw:

Thank you OpenCode for making kimi2.5 freely available.